Volumetric Light in a Unity Custom Render Pipeline: The Ray Marching (RayMarch) Algorithm


I. Introduction

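This article builds on Catlike Coding's “Custom SRP 2.5.0” implementation and, following the Zhihu article “Volumetric Light 体积光”, adds a volumetric light post-processing effect, together with the steps needed to implement it.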

II. Environment

Unity: 2022.3.18f1

Core RP Library: 14.0.10

Base pipeline: Custom SRP 2.5.0

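III. Outline of the Implementation

This article does not go into the theory of ray-marched volumetric light; it only outlines the steps.

During post-processing, for every pixel on screen we start at the camera position and step forward until we reach the world-space position of the surface seen through that pixel. At each step we add the light intensity that reaches the current position. This produces a texture of accumulated light intensity, which is the volumetric light texture. To apply it, we simply add this texture on top of the final image output to the screen.

To do this, we need the world-space position corresponding to the current pixel, and the light intensity at each sample point.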
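The accumulation described above can be sketched on the CPU in plain C# (no Unity APIs, only `System.Numerics`). This is an illustrative sketch, not the shader used later: `lightAt` is a hypothetical callback standing in for the per-point light lookup (the actual implementation samples shadow maps), and the samples are evenly spaced without the per-pixel jitter used later. It follows the same outline: clamp the ray to a maximum length, step from the camera toward the surface, scale each sample by a density factor, and average:

```csharp
using System;
using System.Numerics;

public static class RayMarchSketch
{
    // Average light along the segment camera -> surface.
    // lightAt is a hypothetical stand-in for "how much light reaches this point".
    public static float Accumulate(Vector3 cameraPos, Vector3 surfacePos, int sampleCount,
                                   float maxRayLength, float density, Func<Vector3, float> lightAt)
    {
        Vector3 toSurface = surfacePos - cameraPos;
        float rayLength = MathF.Min(toSurface.Length(), maxRayLength); // clamp the march distance
        if (rayLength <= 0f || sampleCount <= 0) return 0f;

        Vector3 dir = Vector3.Normalize(toSurface);
        Vector3 end = cameraPos + dir * rayLength;                     // ray end point

        float sum = 0f;
        for (int i = 0; i < sampleCount; i++)
        {
            float t = (float)i / sampleCount;                          // 0, 1/n, 2/n, ...
            Vector3 p = Vector3.Lerp(cameraPos, end, t);               // current sample position
            sum += lightAt(p) * density;                               // accumulate scaled light
        }
        return sum / sampleCount;                                      // average over the samples
    }
}
```

For example, with a hypothetical light that only reaches points with z ≥ 5 on a ray from (0, 0, 0) to (0, 0, 10), four of the eight samples are lit and the result is 0.5.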

IV. Implementation

1. Set up the basic structure

1.1 CPU side

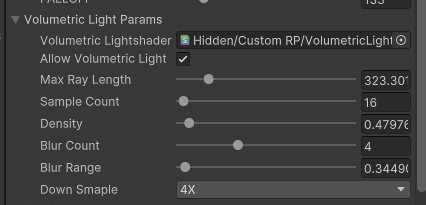

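Add a VolumetricLightParams class to hold the adjustable volumetric light parameters:

```csharp
using System;
using UnityEngine;

[Serializable]
public class VolumetricLightParams
{
    [SerializeField]
    Shader VolumetricLightshader = default;

    [System.NonSerialized]
    Material VolumetricLightmaterial;

    // The material is created on demand from the assigned shader and never saved
    public Material VolumetricLightMaterial
    {
        get
        {
            if (VolumetricLightmaterial == null && VolumetricLightshader != null)
            {
                VolumetricLightmaterial = new Material(VolumetricLightshader);
                VolumetricLightmaterial.hideFlags = HideFlags.HideAndDontSave;
            }
            return VolumetricLightmaterial;
        }
    }

    public bool allowVolumetricLight;

    // Maximum distance marched from the camera
    [Range(0.0f, 2000f)]
    public float MaxRayLength = 20f;

    // Number of ray-march steps per light
    [Range(1, 1024)]
    public int SampleCount = 8;

    // Intensity factor applied to every sample
    [Range(0, 10f)]
    public float Density = 0.1f;

    // Number of blur iterations
    [Range(1, 10)]
    public int BlurCount = 1;

    // Base blur offset in texels (scaled by the iteration number)
    [Range(0.1f, 10f)]
    public float BlurRange = 0.11f;

    // Divisor applied to the buffer size of the volumetric light texture
    // ([SerializeField] has no effect on a type declaration, so it is omitted here)
    public enum DownSmapleValue { None = 1, _2X = 2, _4X = 4, _8X = 8, _16X = 16 }

    [SerializeField]
    public DownSmapleValue DownSmaple = DownSmapleValue.None;
}
```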
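`DownSmaple` acts as an integer divisor of the camera buffer size when the volumetric light texture is allocated (`BufferSize / (int)volumetricLightParams.DownSmaple` in `VolumetricLightPass.Record`). A small plain-C# sketch of the resulting texture size, assuming a 1920×1080 buffer for illustration; the `Math.Max(1, ...)` clamp is an addition of this sketch, not part of the original code:

```csharp
using System;

public static class DownsampleSketch
{
    // Mirrors the DownSmapleValue enum above (the values are the divisors)
    public enum DownSmapleValue { None = 1, _2X = 2, _4X = 4, _8X = 8, _16X = 16 }

    // Volumetric light texture size for a given camera buffer size.
    // Integer division truncates; Math.Max(1, ...) is an extra safety clamp of this sketch.
    public static (int width, int height) TextureSize(int bufferWidth, int bufferHeight, DownSmapleValue factor)
    {
        int d = (int)factor;
        return (Math.Max(1, bufferWidth / d), Math.Max(1, bufferHeight / d));
    }
}
```

Because the ray march runs once per texel of this texture, `_4X` (480×270 for a 1920×1080 buffer) reduces the number of ray-marched pixels by a factor of 16, at the cost of a lower-resolution result.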

Add a reference to it in PostFXSettings:

```csharp
public class PostFXSettings : ScriptableObject
{
    // ...

    public VolumetricLightParams volumetricLightParams = default;

    // ...
}
```

Add VolumetricLightPass.cs:

```csharp
using UnityEngine.Experimental.Rendering.RenderGraphModule;
using UnityEngine.Rendering;
using UnityEngine;
using UnityEngine.Experimental.Rendering;

public class VolumetricLightPass
{
    static readonly ProfilingSampler sampler = new("VolumetricLightPass");

    static int
        _VolumetricLightTexture = Shader.PropertyToID("_VolumetricLightTexture");

    TextureHandle VolumetricLightAttachmentHandle;

    VolumetricLightParams volumetricLightParams;

    Camera camera;

    ShadowSettings settings;

    // Pass indices; the order must match the pass order in VolumetricLight.shader
    enum VolumetricLightPassEnum
    {
        VolumetricLightPass,
    }

    void Render(RenderGraphContext context)
    {
        CommandBuffer buffer = context.cmd;
        // Draw a full-screen triangle into the volumetric light texture
        buffer.SetRenderTarget(VolumetricLightAttachmentHandle, RenderBufferLoadAction.DontCare, RenderBufferStoreAction.Store);
        buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.VolumetricLightPass, MeshTopology.Triangles, 3);
        context.renderContext.ExecuteCommandBuffer(buffer);
        buffer.Clear();
    }

    public static void Record(RenderGraph renderGraph, Camera camera, VolumetricLightParams volumetricLightParams, Vector2Int BufferSize,
        in CameraRendererTextures textures,
        in LightResources lightData, ShadowSettings shadowSettings)
    {
        using RenderGraphBuilder builder = renderGraph.AddRenderPass(sampler.name, out VolumetricLightPass pass, sampler);

        // Pixel size of the (optionally downsampled) volumetric light texture.
        // The (width, height) constructor is used on purpose: a Vector2Int argument would convert
        // implicitly to Vector2 and select the scale-based TextureDesc constructor instead.
        Vector2Int size = BufferSize / (int)volumetricLightParams.DownSmaple;
        var desc = new TextureDesc(size.x, size.y)
        {
            colorFormat = GraphicsFormat.R8G8B8A8_SRGB,
            name = "VolumetricLightPass"
        };
        pass.VolumetricLightAttachmentHandle = builder.CreateTransientTexture(desc);
        pass.volumetricLightParams = volumetricLightParams;
        pass.camera = camera;
        pass.settings = shadowSettings;

        builder.SetRenderFunc<VolumetricLightPass>(
            static (pass, context) => pass.Render(context));
    }
}
```

Call the pass from CameraRenderer.cs:

```csharp
public partial class CameraRenderer
{
    // ...

    public void Render(RenderGraph renderGraph, ScriptableRenderContext context, Camera camera, CameraBufferSettings bufferSettings, bool useLightsPerObject, ShadowSettings shadowSettings, PostFXSettings postFXSettings, int colorLUTResolution)
    {
        // ...

        using (renderGraph.RecordAndExecute(renderGraphParameters))
        {
            // ...

            UnsupportedShadersPass.Record(renderGraph, camera, cullingResults);

            // Only record the pass when post FX settings are assigned and volumetric light is enabled
            if (postFXSettings != null && postFXSettings.volumetricLightParams.allowVolumetricLight)
            {
                VolumetricLightPass.Record(renderGraph, camera, postFXSettings.volumetricLightParams, bufferSize, textures, lightResources, shadowSettings);
            }

            // ...
        }
    }
}
```

1.2 Shader side

Create VolumetricLight.hlsl:

```hlsl
#ifndef VOLUMETRICLIGHT_INCLUDED
#define VOLUMETRICLIGHT_INCLUDED

struct Varyings {
    float4 positionCS_SS : SV_POSITION;
    float2 screenUV : VAR_SCREEN_UV;
};

// Full-screen triangle generated from the vertex ID, so no mesh is needed
Varyings DefaultPassVertex(uint vertexID : SV_VertexID) {
    Varyings output;
    output.positionCS_SS = float4(
        vertexID <= 1 ? -1.0 : 3.0,
        vertexID == 1 ? 3.0 : -1.0,
        0.0, 1.0
    );
    output.screenUV = float2(
        vertexID <= 1 ? 0.0 : 2.0,
        vertexID == 1 ? 2.0 : 0.0
    );
    // Flip V when the projection is flipped (_ProjectionParams.x is negative)
    if (_ProjectionParams.x < 0.0) {
        output.screenUV.y = 1.0 - output.screenUV.y;
    }
    return output;
}

// Placeholder for now: outputs black
half4 VolumetricLightFragment(Varyings input) : SV_TARGET {
    return 0;
}

#endif
```

Create VolumetricLight.shader:

Shader "Hidden/Custom RP/VolumetricLight"

{

SubShader{

HLSLINCLUDE

#include "../ShaderLibrary/Common.hlsl"

#include "../ShaderLibrary/VolumetricLight.hlsl"

ENDHLSL

Pass{

Name "VolumetricLight"

Cull Off

ZTest Always

ZWrite Off

HLSLPROGRAM

#pragma vertex DefaultPassVertex

#pragma fragment VolumetricLightFragment

ENDHLSL

}

}

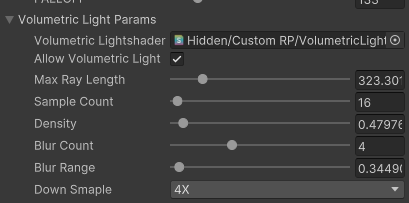

}关联以及参数设置

在参数设置中绑定好Shader
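`DrawProcedural(..., MeshTopology.Triangles, 3)` draws three vertices without a mesh, and `DefaultPassVertex` derives their positions from `SV_VertexID`: (−1, −1), (−1, 3) and (3, −1). This single oversized triangle has its long edge on the line x + y = 2, which passes through the screen corner (1, 1), so it covers the whole [−1, 1]² clip-space square; its UVs run from 0 to 2 so that UV = (position + 1) / 2 everywhere on screen. A plain-C# sketch that re-evaluates the same expressions (ignoring the `_ProjectionParams.x` flip) and checks that mapping:

```csharp
using System;

public static class FullScreenTriangleSketch
{
    // Same expressions as DefaultPassVertex, evaluated for one vertex ID
    public static (float x, float y, float u, float v) Vertex(uint vertexID)
    {
        float x = vertexID <= 1 ? -1f : 3f;
        float y = vertexID == 1 ? 3f : -1f;
        float u = vertexID <= 1 ? 0f : 2f;
        float v = vertexID == 1 ? 2f : 0f;
        return (x, y, u, v);
    }

    // Clip-space x/y in [-1, 1] maps linearly to UV in [0, 1]: uv = (pos + 1) / 2
    public static bool UvMatchesPosition(uint vertexID)
    {
        var (x, y, u, v) = Vertex(vertexID);
        return u == (x + 1f) * 0.5f && v == (y + 1f) * 0.5f;
    }
}
```

Compared with a two-triangle quad, the single triangle avoids a diagonal seam and needs no vertex buffer, which is why it is a common choice for full-screen passes.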

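2. Get the world-space position of the surface under the current pixel

Here the world position is obtained with the ray-based method (reconstructing the position along the view ray through each pixel).

2.1 CPU side

Pass the near-clip-plane corner positions to the GPU. Later, the world position of the surface under each pixel is computed from the depth texture, the camera position, and the near-plane coordinates.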
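The reconstruction relies on similar triangles: every point on the near plane lies at view depth `near`, so scaling the camera-to-near-plane vector through a pixel by `linearEyeDepth / near` lands on the surface seen through that pixel. A plain-C# sketch of the same arithmetic as `ReconstructViewPos` (defined in the shader part of this step), using `System.Numerics`. For illustration it assumes a hypothetical camera looking down −Z with a symmetric frustum and builds the corner vectors directly from made-up near-plane half-extents `halfW` and `halfH`, instead of inverting a view-projection matrix as `SetCameraParams` does:

```csharp
using System;
using System.Numerics;

public static class ReconstructSketch
{
    // Camera-relative near-plane data in the form SetCameraParams uploads it
    // (hypothetical camera looking down -Z, symmetric frustum).
    public static (Vector3 topLeft, Vector3 xExtent, Vector3 yExtent) NearPlane(float near, float halfW, float halfH)
    {
        Vector3 topLeft = new Vector3(-halfW, halfH, -near);
        Vector3 topRight = new Vector3(halfW, halfH, -near);
        Vector3 bottomLeft = new Vector3(-halfW, -halfH, -near);
        return (topLeft, topRight - topLeft, bottomLeft - topLeft);
    }

    // Same steps as ReconstructViewPos: flip v, interpolate on the near plane, scale by depth / near
    public static Vector3 ReconstructViewPos(Vector2 uv, float linearEyeDepth, float near,
                                             Vector3 topLeft, Vector3 xExtent, Vector3 yExtent)
    {
        uv.Y = 1f - uv.Y;                               // screen UV has v = 0 at the bottom
        float zScale = linearEyeDepth / near;           // _ProjectionParams2.x holds 1 / near
        Vector3 onNearPlane = topLeft + xExtent * uv.X + yExtent * uv.Y;
        return onNearPlane * zScale;                    // offset from the camera to the surface
    }
}
```

With `near = 0.5`, the screen center at a linear depth of 10 reconstructs to (0, 0, −10), ten units straight ahead of this hypothetical camera; adding the camera position turns the offset into a world position.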

```csharp
public class VolumetricLightPass
{
    // ...

    static int
        ProjectionParams2 = Shader.PropertyToID("_ProjectionParams2"),
        CameraViewTopLeftCorner = Shader.PropertyToID("_CameraViewTopLeftCorner"),
        CameraViewXExtent = Shader.PropertyToID("_CameraViewXExtent"),
        CameraViewYExtent = Shader.PropertyToID("_CameraViewYExtent"),
        _VolumetricLightTexture = Shader.PropertyToID("_VolumetricLightTexture");

    // ...

    void SetCameraParams(CommandBuffer buffer)
    {
        Matrix4x4 view = camera.worldToCameraMatrix;
        Matrix4x4 proj = camera.projectionMatrix;

        // Zero the translation of the view matrix, so the results are world-space vectors relative to the camera
        Matrix4x4 cview = view;
        cview.SetColumn(3, new Vector4(0.0f, 0.0f, 0.0f, 1.0f));
        Matrix4x4 cviewProj = proj * cview;

        // Inverse of the view-projection matrix: from clip space back to (camera-relative) world space
        Matrix4x4 cviewProjInv = cviewProj.inverse;

        // World-space positions of three of the near-plane corners (the fourth is not needed);
        // camera.projectionMatrix uses the OpenGL convention, so the near plane is at NDC z = -1
        var near = camera.nearClipPlane;
        Vector4 topLeftCorner = cviewProjInv.MultiplyPoint(new Vector3(-1.0f, 1.0f, -1.0f));
        Vector4 topRightCorner = cviewProjInv.MultiplyPoint(new Vector3(1.0f, 1.0f, -1.0f));
        Vector4 bottomLeftCorner = cviewProjInv.MultiplyPoint(new Vector3(-1.0f, -1.0f, -1.0f));

        // Edge vectors spanning the near plane
        Vector4 cameraXExtent = topRightCorner - topLeftCorner;
        Vector4 cameraYExtent = bottomLeftCorner - topLeftCorner;

        // Upload the parameters
        buffer.SetGlobalVector(CameraViewTopLeftCorner, topLeftCorner);
        buffer.SetGlobalVector(CameraViewXExtent, cameraXExtent);
        buffer.SetGlobalVector(CameraViewYExtent, cameraYExtent);
        buffer.SetGlobalVector(ProjectionParams2, new Vector4(1.0f / near, camera.transform.position.x, camera.transform.position.y, camera.transform.position.z));
    }

    void Render(RenderGraphContext context)
    {
        CommandBuffer buffer = context.cmd;
        SetCameraParams(buffer);
        buffer.SetRenderTarget(VolumetricLightAttachmentHandle, RenderBufferLoadAction.DontCare, RenderBufferStoreAction.Store);
        buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.VolumetricLightPass, MeshTopology.Triangles, 3);
        context.renderContext.ExecuteCommandBuffer(buffer);
        buffer.Clear();
    }

    public static void Record(RenderGraph renderGraph, Camera camera, VolumetricLightParams volumetricLightParams, Vector2Int BufferSize,
        in CameraRendererTextures textures,
        in LightResources lightData, ShadowSettings shadowSettings)
    {
        // ...

        // Read the depth texture
        builder.ReadTexture(textures.depthCopy);

        builder.SetRenderFunc<VolumetricLightPass>(
            static (pass, context) => pass.Render(context));
    }
}
```

2.2 Shader side

Add the following to Common.hlsl (the location does not matter, as long as the code that calls it can see it):

```hlsl
float4 _ProjectionParams2;
float4 _CameraViewTopLeftCorner;
float4 _CameraViewXExtent;
float4 _CameraViewYExtent;

// From the linear eye depth and the screen UV, reconstruct the world-space offset from the camera to the surface
// (float3 rather than half3 keeps precision for distant surfaces)
float3 ReconstructViewPos(float2 uv, float linearEyeDepth) {
    // Screen is y-inverted
    uv.y = 1.0 - uv.y;
    float zScale = linearEyeDepth * _ProjectionParams2.x; // divide by near plane
    float3 viewPos = _CameraViewTopLeftCorner.xyz + _CameraViewXExtent.xyz * uv.x + _CameraViewYExtent.xyz * uv.y;
    viewPos *= zScale;
    return viewPos;
}
```

在VolumetricLight.hlsl 中添加代码

half4 VolumetricLightFragment(Varyings input) : SV_TARGET{

//采样深度

float depth = SAMPLE_DEPTH_TEXTURE_LOD(_CameraDepthTexture, sampler_point_clamp, input.screenUV, 0);

depth = IsOrthographicCamera() ? OrthographicDepthBufferToLinear(depth) : LinearEyeDepth(depth, _ZBufferParams);

float3 wpos = _WorldSpaceCameraPos + ReconstructViewPos(input.screenUV, depth);

return float4(wpos ,1);

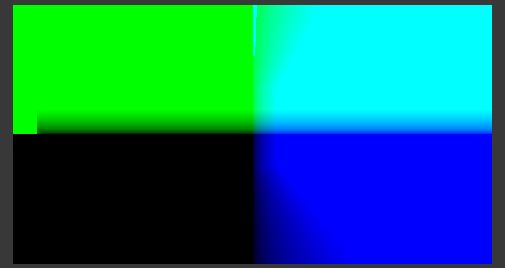

}2.3 效果

这个时候输出的应该是类似这种四色方框样子,根据摄像机位置不同,输出的色块也不同

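3. Generate the volumetric light texture

The texture is generated with the ray marching algorithm; for the underlying theory, see the following article:

“URP | 后处理 - 体积光 Volumetric Lighting” (URP | Post-Processing: Volumetric Lighting)

3.1 CPU side

During marching, the light intensity at each sample is obtained from the shadow maps, so the related shader keywords need to be configured.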
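For directional lights, the shadow lookup first has to pick a cascade. `GetDirLightAttenuation` (in the shader part of this step) loops over the cascades and takes the first one whose culling sphere contains the sample point, comparing squared distances against `cullingSphere.w`, which Catlike's CRP stores as a squared radius. A plain-C# sketch of that selection with made-up sphere data; returning −1 when no sphere contains the point is a choice of this sketch, marking samples for which no cascade matrix applies:

```csharp
using System;
using System.Numerics;

public static class CascadeSketch
{
    // Index of the first cascade whose culling sphere (center, squared radius) contains p, or -1 if none does
    public static int SelectCascade(Vector3 p, Vector3[] centers, float[] radiiSquared)
    {
        for (int i = 0; i < centers.Length; i++)
        {
            // Compare squared distances, so no square root is needed
            if (Vector3.DistanceSquared(p, centers[i]) < radiiSquared[i])
                return i;
        }
        return -1; // outside every cascade: no shadow matrix to use
    }
}
```

Points beyond the last cascade have no valid shadow matrix, so a caller has to decide explicitly how to treat them (for example as unshadowed) rather than index past the cascade data.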

```csharp
public class VolumetricLightPass
{
    // ...

    static readonly GlobalKeyword[] directionalFilterKeywords = {
        GlobalKeyword.Create("_DIRECTIONAL_PCF3"),
        GlobalKeyword.Create("_DIRECTIONAL_PCF5"),
        GlobalKeyword.Create("_DIRECTIONAL_PCF7"),
    };

    static readonly GlobalKeyword[] otherFilterKeywords = {
        GlobalKeyword.Create("_OTHER_PCF3"),
        GlobalKeyword.Create("_OTHER_PCF5"),
        GlobalKeyword.Create("_OTHER_PCF7"),
    };

    static readonly GlobalKeyword[] cascadeBlendKeywords = {
        GlobalKeyword.Create("_CASCADE_BLEND_SOFT"),
        GlobalKeyword.Create("_CASCADE_BLEND_DITHER"),
    };

    static int
        ProjectionParams2 = Shader.PropertyToID("_ProjectionParams2"),
        CameraViewTopLeftCorner = Shader.PropertyToID("_CameraViewTopLeftCorner"),
        CameraViewXExtent = Shader.PropertyToID("_CameraViewXExtent"),
        CameraViewYExtent = Shader.PropertyToID("_CameraViewYExtent"),
        _SampleCount = Shader.PropertyToID("_SampleCount"),
        _Density = Shader.PropertyToID("_Density"),
        _MaxRayLength = Shader.PropertyToID("_MaxRayLength"),
        _RandomNumber = Shader.PropertyToID("_RandomNumber"),
        _VolumetricLightTexture = Shader.PropertyToID("_VolumetricLightTexture");

    // Enable only the keyword at enabledIndex; an index of -1 disables all of them
    void SetKeywords(CommandBuffer buffer, GlobalKeyword[] keywords, int enabledIndex)
    {
        for (int i = 0; i < keywords.Length; i++)
        {
            buffer.SetKeyword(keywords[i], i == enabledIndex);
        }
    }

    void Render(RenderGraphContext context)
    {
        CommandBuffer buffer = context.cmd;
        SetCameraParams(buffer);

        // Use the same shadow filter and cascade blend keywords as the shadow settings
        SetKeywords(buffer, directionalFilterKeywords, (int)settings.directional.filter - 1);
        SetKeywords(buffer, cascadeBlendKeywords, (int)settings.directional.cascadeBlend - 1);
        SetKeywords(buffer, otherFilterKeywords, (int)settings.other.filter - 1);

        buffer.SetGlobalInt(_SampleCount, volumetricLightParams.SampleCount);
        buffer.SetGlobalFloat(_Density, volumetricLightParams.Density);
        buffer.SetGlobalFloat(_MaxRayLength, volumetricLightParams.MaxRayLength);
        // New random offset every frame for the per-pixel jitter
        buffer.SetGlobalFloat(_RandomNumber, Random.Range(0.0f, 1.0f));

        buffer.SetRenderTarget(VolumetricLightAttachmentHandle, RenderBufferLoadAction.DontCare, RenderBufferStoreAction.Store);
        buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.VolumetricLightPass, MeshTopology.Triangles, 3);

        // ...
    }

    public static void Record(RenderGraph renderGraph, Camera camera, VolumetricLightParams volumetricLightParams, Vector2Int BufferSize,
        in CameraRendererTextures textures,
        in LightResources lightData, ShadowSettings shadowSettings)
    {
        // ...

        builder.ReadTexture(textures.depthCopy);

        // Read the light and shadow data used by the ray march
        builder.ReadComputeBuffer(lightData.directionalLightDataBuffer);
        builder.ReadComputeBuffer(lightData.otherLightDataBuffer);
        builder.ReadTexture(lightData.shadowResources.directionalAtlas);
        builder.ReadTexture(lightData.shadowResources.otherAtlas);
        builder.ReadComputeBuffer(lightData.shadowResources.directionalShadowCascadesBuffer);
        builder.ReadComputeBuffer(lightData.shadowResources.directionalShadowMatricesBuffer);
        builder.ReadComputeBuffer(lightData.shadowResources.otherShadowDataBuffer);

        // ...
    }
}
```

3.2 Shader side

Modify VolumetricLight.shader:

```shaderlab
Pass {
    Name "VolumetricLight"
    Cull Off
    ZTest Always
    ZWrite Off

    HLSLPROGRAM
    // Shadow filter variants, matching the keywords set by VolumetricLightPass
    #pragma multi_compile _ _OTHER_PCF3 _OTHER_PCF5 _OTHER_PCF7
    #pragma multi_compile _ _DIRECTIONAL_PCF3 _DIRECTIONAL_PCF5 _DIRECTIONAL_PCF7
    #pragma vertex DefaultPassVertex
    #pragma fragment VolumetricLightFragment
    ENDHLSL
}
```

Modify VolumetricLight.hlsl:

#ifndef VOLUMETRICLIGHT_INCLUDED

#define VOLUMETRICLIGHT_INCLUDED

#include "Surface.hlsl"

#include "Shadows.hlsl"

#include "Light.hlsl"

#define random(seed) sin(seed * 641.5467987313875 + 1.943856175)

int _SampleCount;

float _Density;

float _MaxRayLength;

float _RandomNumber;

....................

//获取平行光光照的强度

float GetDirLightAttenuation(DirectionalLightData Lightdata,float3 wpos)

{

float atten = 1;

int i;

for (i = 0; i < _CascadeCount; i++) {

DirectionalShadowCascade cascade = _DirectionalShadowCascades[i];

float distanceSqr = DistanceSquared(wpos, cascade.cullingSphere.xyz);

if (distanceSqr < cascade.cullingSphere.w) {

break;

}

}

float3 positionSTS = mul(

_DirectionalShadowMatrices[Lightdata.shadowData.y + i],

float4(wpos, 1.0)

).xyz;

atten = FilterDirectionalShadow(positionSTS);

return atten;

}

//获取其他类型光照的强度

float GetOtherLightAttenuation(OtherLightData Lightdata, float3 wpos)

{

float atten = 0.1;

float tileIndex = Lightdata.shadowData.y;

float3 position = Lightdata.position.xyz;

float3 ray = position - wpos;

float3 ratDir = normalize(ray);

//IsPoint

if (Lightdata.shadowData.z == 1.0) {

float faceOffset = CubeMapFaceID(-ratDir);

tileIndex += faceOffset;

}

OtherShadowBufferData data = _OtherShadowData[tileIndex];

float distanceSqr = max(dot(ray, ray), 0.00001);

float rangeAttenuation = Square2(saturate(1.0 - Square2(distanceSqr * Lightdata.position.w)));

float3 spotDirection = Lightdata.directionAndMask.xyz;

float spotAttenuation = Square2(saturate(dot(spotDirection, ratDir) * Lightdata.spotAngle.x + Lightdata.spotAngle.y));

float4 positionSTS = mul(data.shadowMatrix, float4(wpos, 1.0));

atten = FilterOtherShadow(positionSTS.xyz / positionSTS.w, data.tileData.xyz) * spotAttenuation * rangeAttenuation / distanceSqr;

return atten;

}

half4 RayMarch(float3 worldPos,float2 uv)

{

float3 startPos = _WorldSpaceCameraPos; //摄像机上的世界坐标

float3 dir = normalize(worldPos - startPos); //视线方向

float rayLength = length(worldPos - startPos); //视线长度

rayLength = min(rayLength, _MaxRayLength); //限制最大步进长度,_MaxRayLength这里设置为20

float3 final = startPos + dir * rayLength; //定义步进结束点

float2 step = 1.0 / _SampleCount; //定义单次插值大小,_SampleCount为步进次数

step.y *= 0.4;

float seed = random((_ScreenParams.y * uv.y + uv.x) * _ScreenParams.x + _RandomNumber);

half3 intensity = 0; //累计光强

for (int j = 0; j < GetDirectionalLightCount(); j++) {

DirectionalLightData Lightdata = _DirectionalLightData[j];

half3 tempintensity = 0;

for (float i = 0; i < 1; i += step) //光线步进

{

seed = random(seed);

float3 currentPosition = lerp(startPos, final, i + seed * step.y); //当前世界坐标

float atten = GetDirLightAttenuation(Lightdata, currentPosition) * _Density; //阴影采样,_Density为强度因子

float3 light = atten;

tempintensity += light;

}

intensity += (tempintensity * Lightdata.color /_SampleCount) ;

}

for (int j = 0; j < GetOtherLightCount(); j++) {

OtherLightData Lightdata = _OtherLightData[j];

half3 tempintensity = 0;

for (float i = 0; i < 1; i += step) //光线步进

{

seed = random(seed);

float3 currentPosition = lerp(startPos, final, i + seed * step.y);

float atten = GetOtherLightAttenuation(Lightdata, currentPosition) * _Density;

float3 light = atten;

tempintensity += light;

}

intensity += (tempintensity * Lightdata.color / _SampleCount);

}

int lightCount = GetDirectionalLightCount() + GetOtherLightCount();

intensity /= lightCount;

return half4(intensity, 1); //查看结果

}

half4 VolumetricLightFragment(Varyings input) : SV_TARGET{

//采样深度

float depth = SAMPLE_DEPTH_TEXTURE_LOD(_CameraDepthTexture, sampler_point_clamp, input.screenUV, 0);

depth = IsOrthographicCamera() ? OrthographicDepthBufferToLinear(depth) : LinearEyeDepth(depth, _ZBufferParams);

float3 wpos = _WorldSpaceCameraPos + ReconstructViewPos(input.screenUV, depth);

return RayMarch(wpos, input.screenUV);

}

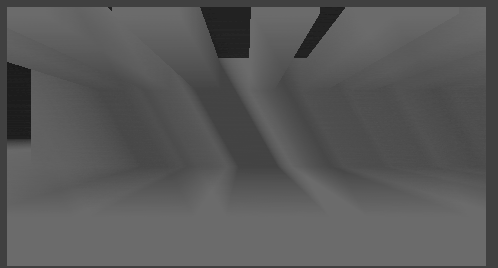

#endif3.3 效果

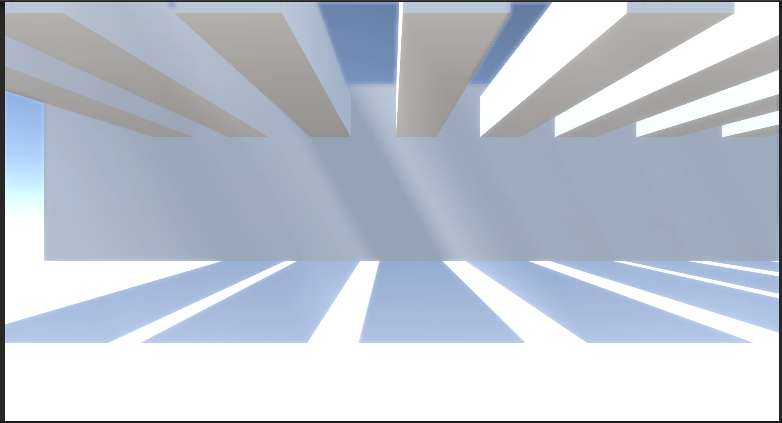

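At this point you should get a grayscale image with visible gradations; this is the volumetric light texture used in the following steps. With this, the core of the feature is in place.

Original image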
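`RayMarch` offsets each sample by `seed * step.y`: `seed` is passed through the `random` macro (`sin(seed * 641.5467987313875 + 1.943856175)`) before every sample, and `step.y` is 0.4 of the step size. Since `sin` returns values in [−1, 1], each sample stays within ±40% of a step around its regular position, trading banding for per-pixel noise (which the blur in step 5 then softens). A plain-C# sketch of the sample parameters along one ray; the initial seed here is arbitrary, whereas the shader derives it from the pixel position and `_RandomNumber`:

```csharp
using System;

public static class JitterSketch
{
    // Same hash as the HLSL macro: random(seed) = sin(seed * 641.5467987313875 + 1.943856175)
    public static double Random(double seed) => Math.Sin(seed * 641.5467987313875 + 1.943856175);

    // Interpolation parameters t used for lerp(startPos, final, t) along one ray
    public static double[] SampleParameters(int sampleCount, double initialSeed)
    {
        double step = 1.0 / sampleCount;     // step.x in the shader
        double jitter = step * 0.4;          // step.y in the shader
        double seed = Random(initialSeed);
        var t = new double[sampleCount];
        for (int i = 0; i < sampleCount; i++)
        {
            seed = Random(seed);             // new pseudo-random value in [-1, 1] per sample
            t[i] = i * step + seed * jitter; // regular position plus bounded jitter
        }
        return t;
    }
}
```

Note that when the hash is negative, the first sample lands slightly behind the camera (a small negative t); the offset is bounded by 0.4 of a step, so the effect is minor.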

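4. Apply the volumetric light texture

4.1 CPU side

Adjust VolumetricLightPass.cs.

The main change is reading the current color buffer, which is needed for the blending later on.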
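The color buffer has to be read because the Final pass (added in 4.2) blends onto it with `Blend One SrcAlpha, Zero One`: color = src.rgb × 1 + dst.rgb × src.a, and alpha = src.a × 0 + dst.a × 1. `RayMarch` outputs alpha 1, so the volumetric light is effectively added to the existing color, while the destination alpha stays unchanged. A plain-C# sketch of that blend state for one pixel, with colors as RGBA tuples:

```csharp
using System;

public static class BlendSketch
{
    // Blend One SrcAlpha, Zero One:
    //   rgb = src.rgb * One  + dst.rgb * SrcAlpha
    //   a   = src.a   * Zero + dst.a   * One
    public static (float r, float g, float b, float a) Blend(
        (float r, float g, float b, float a) src,
        (float r, float g, float b, float a) dst)
    {
        return (src.r + dst.r * src.a,
                src.g + dst.g * src.a,
                src.b + dst.b * src.a,
                dst.a);
    }
}
```

With src.a = 1 this is plain additive blending; with src.a = 0 the destination color would be replaced instead, which is why the ray-march pass writes alpha 1.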

```csharp
public class VolumetricLightPass
{
    // ...

    static int
        _VolumetricLightTexture = Shader.PropertyToID("_VolumetricLightTexture");

    TextureHandle colorAttachment;

    // ...

    enum VolumetricLightPassEnum
    {
        VolumetricLightPass,
        Final,
    }

    void Render(RenderGraphContext context)
    {
        // ...

        // Expose the volumetric light texture to the Final pass
        buffer.SetGlobalTexture(_VolumetricLightTexture, VolumetricLightAttachmentHandle);
        // Load the existing color: the Final pass blends on top of it
        buffer.SetRenderTarget(colorAttachment, RenderBufferLoadAction.Load, RenderBufferStoreAction.Store);
        buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.Final, MeshTopology.Triangles, 3);
        context.renderContext.ExecuteCommandBuffer(buffer);
        buffer.Clear();
    }

    public static void Record(RenderGraph renderGraph, Camera camera, VolumetricLightParams volumetricLightParams, Vector2Int BufferSize,
        in CameraRendererTextures textures,
        in LightResources lightData, ShadowSettings shadowSettings)
    {
        // ...

        // Read and write the camera color attachment
        pass.colorAttachment = builder.ReadWriteTexture(textures.colorAttachment);

        builder.SetRenderFunc<VolumetricLightPass>(
            static (pass, context) => pass.Render(context));
    }
}
```

4.2 Shader side

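Modify VolumetricLight.shader and add the following pass after the VolumetricLight pass (matching the enum order):

```shaderlab
Pass
{
    ZTest Always
    ZWrite Off
    Cull Off
    // color = src + dst * src.a; the destination alpha is kept
    Blend One SrcAlpha, Zero One
    Name "Final"

    HLSLPROGRAM
    #pragma vertex DefaultPassVertex
    #pragma fragment frag_final
    ENDHLSL
}
```

Modify VolumetricLight.hlsl: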

...................

#define random(seed) sin(seed * 641.5467987313875 + 1.943856175)

TEXTURE2D(_VolumetricLightTexture);

SAMPLER(sampler_VolumetricLightTexture);

.................

half4 frag_final(Varyings input) : SV_TARGET{

float4 b = SAMPLE_TEXTURE2D_LOD(_VolumetricLightTexture, sampler_VolumetricLightTexture, input.screenUV, 0);

return b;

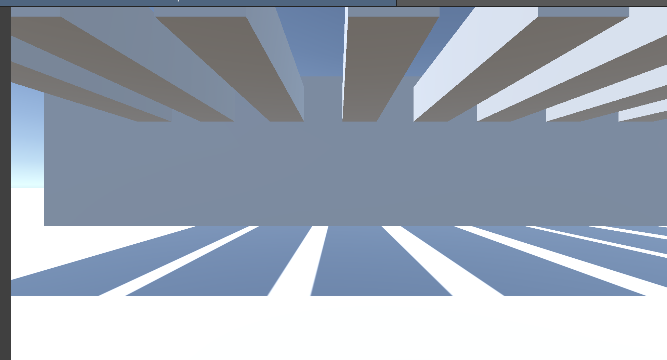

}4.3 效果

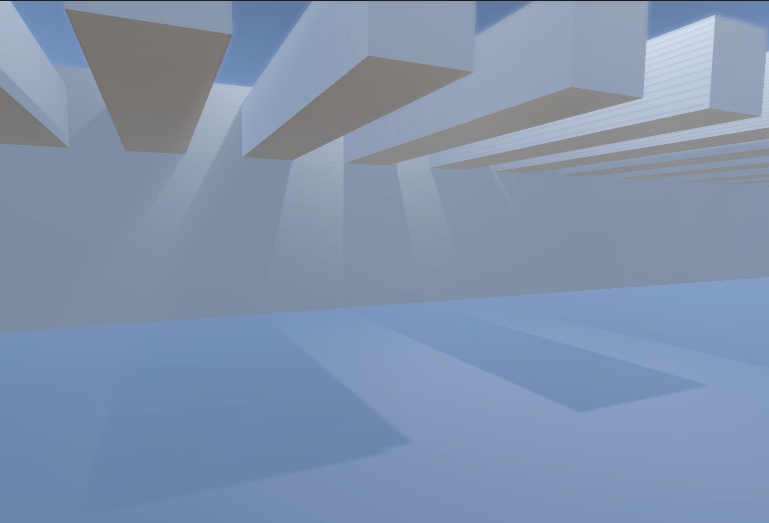

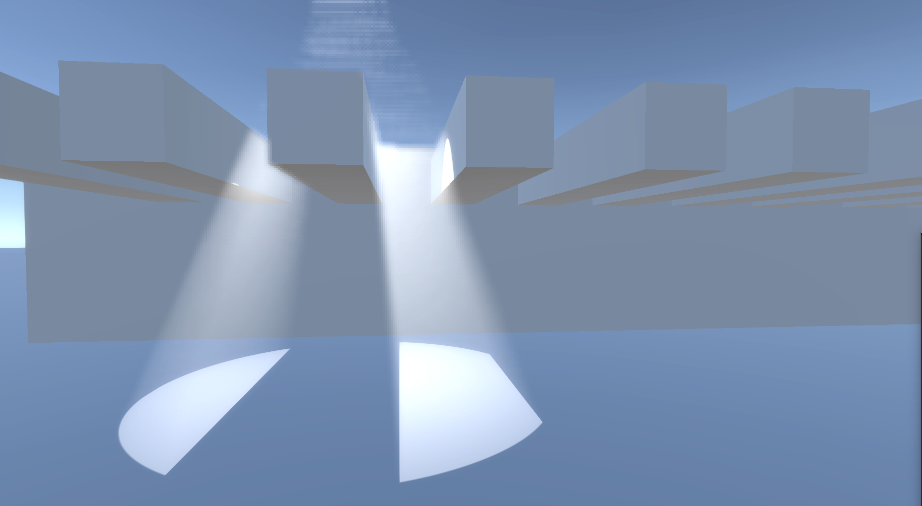

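Parameter settings:

Directional light:

Point light: the effect is less noticeable; compared with the directional light, the intensity needs to be increased.

Spot light: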
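One likely contributor to the weaker point-light result: in `GetOtherLightAttenuation`, each sample is multiplied by a smooth range falloff and divided by the squared distance to the light, whereas directional-light samples only receive the shadow term. A plain-C# sketch of that distance falloff alone (no shadow or spot-cone term), assuming the inverse squared range is computed the way Catlike's CRP stores it in `position.w` (1 / range²):

```csharp
using System;

public static class PointFalloffSketch
{
    static float Square(float x) => x * x;
    static float Saturate(float x) => Math.Clamp(x, 0f, 1f);

    // rangeAttenuation / distanceSqr, as in GetOtherLightAttenuation (no shadow, no spot term)
    public static float Falloff(float distance, float range)
    {
        float distanceSqr = MathF.Max(distance * distance, 0.00001f);
        float invRangeSqr = 1f / MathF.Max(range * range, 0.00001f); // position.w in CRP
        float rangeAttenuation = Square(Saturate(1f - Square(distanceSqr * invRangeSqr)));
        return rangeAttenuation / distanceSqr;
    }
}
```

Going from 1 to 2 units away cuts the contribution to roughly a quarter, and it reaches zero at the light's range, so point and spot lights usually need a higher light intensity (or `Density`) than a directional light to show a comparable effect.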

5. Blur the volumetric light texture

Adding some blur makes the result look better.
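The blur pass averages the center texel with four diagonal neighbors, each `_BlurRange` texels away (the CPU side multiplies `BlurRange` by the iteration number, so later passes reach further). A plain-C# sketch of one such pass on a small single-channel image; it uses integer offsets and clamps at the borders to approximate a clamp sampler, whereas the shader samples at fractional UV offsets through the texture's sampler:

```csharp
using System;

public static class BlurSketch
{
    // One pass of the 5-tap blur: center + four diagonal taps at distance `offset`, averaged
    public static float[,] Blur5(float[,] src, int offset)
    {
        int h = src.GetLength(0), w = src.GetLength(1);
        var dst = new float[h, w];
        for (int y = 0; y < h; y++)
        {
            for (int x = 0; x < w; x++)
            {
                float sum = src[y, x]
                    + Sample(src, x - offset, y - offset)
                    + Sample(src, x + offset, y - offset)
                    + Sample(src, x - offset, y + offset)
                    + Sample(src, x + offset, y + offset);
                dst[y, x] = sum / 5f;
            }
        }
        return dst;
    }

    // Clamp-to-edge lookup, like a clamp sampler
    static float Sample(float[,] img, int x, int y)
    {
        int h = img.GetLength(0), w = img.GetLength(1);
        return img[Math.Clamp(y, 0, h - 1), Math.Clamp(x, 0, w - 1)];
    }
}
```

A uniform image is left unchanged, while a single bright texel is spread over itself and its four diagonal neighbors at one fifth of its value each.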

5.1 CPU side

```csharp
using UnityEngine.Experimental.Rendering.RenderGraphModule;
using UnityEngine.Rendering;
using UnityEngine;
using UnityEngine.Experimental.Rendering;

public class VolumetricLightPass
{
    // ...

    static int
        _BlurRange = Shader.PropertyToID("_BlurRange"),
        _TempBlur = Shader.PropertyToID("_TempBlur");

    // ...

    TextureHandle TempBlur, TempBlur2;

    // ...

    // Pass indices; the blur pass sits between the ray-march pass and the Final pass
    enum VolumetricLightPassEnum
    {
        VolumetricLightPass,
        VolumetricLightBlur,
        Final,
    }

    // ...

    void Render(RenderGraphContext context)
    {
        // ...

        buffer.SetRenderTarget(VolumetricLightAttachmentHandle, RenderBufferLoadAction.DontCare, RenderBufferStoreAction.Store);
        buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.VolumetricLightPass, MeshTopology.Triangles, 3);

        // Blur: ping-pong between TempBlur and TempBlur2, starting from the ray-march result
        TextureHandle source = VolumetricLightAttachmentHandle;
        for (int i = 0; i < volumetricLightParams.BlurCount; i++)
        {
            TextureHandle destination = i % 2 == 0 ? TempBlur : TempBlur2;
            buffer.SetGlobalTexture(_TempBlur, source);
            // The blur offset grows with each iteration
            buffer.SetGlobalFloat(_BlurRange, (i + 1) * volumetricLightParams.BlurRange);
            buffer.SetRenderTarget(destination, RenderBufferLoadAction.DontCare, RenderBufferStoreAction.Store);
            buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.VolumetricLightBlur, MeshTopology.Triangles, 3);
            source = destination;
        }
        // The texture written last holds the blurred result
        buffer.SetGlobalTexture(_VolumetricLightTexture, source);

        // ...
    }

    public static void Record(RenderGraph renderGraph, Camera camera, VolumetricLightParams volumetricLightParams, Vector2Int BufferSize,
        in CameraRendererTextures textures,
        in LightResources lightData, ShadowSettings shadowSettings)
    {
        using RenderGraphBuilder builder = renderGraph.AddRenderPass(sampler.name, out VolumetricLightPass pass, sampler);

        // Same downsampled pixel size for the ray-march texture and both blur textures
        Vector2Int size = BufferSize / (int)volumetricLightParams.DownSmaple;
        var desc = new TextureDesc(size.x, size.y)
        {
            colorFormat = GraphicsFormat.R8G8B8A8_SRGB,
            name = "VolumetricLightPass"
        };
        pass.VolumetricLightAttachmentHandle = builder.CreateTransientTexture(desc);
        desc.name = "TempBlur";
        pass.TempBlur = builder.CreateTransientTexture(desc);
        desc.name = "TempBlur2";
        pass.TempBlur2 = builder.CreateTransientTexture(desc);

        // ...
    }
}
```
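In a ping-pong blur each iteration reads the previous result and writes into the other temporary texture, so once the loop ends, the texture to sample is the one written last (and, with zero iterations, the unblurred ray-march output). A plain-C# sketch of that bookkeeping, with hypothetical string names standing in for the `TextureHandle`s:

```csharp
using System;
using System.Collections.Generic;

public static class PingPongSketch
{
    // Returns the (source, destination) pair of every blur iteration and the texture holding the final result
    public static (List<(string src, string dst)> passes, string result) Plan(int blurCount)
    {
        string source = "VolumetricLight";            // output of the ray-march pass
        string[] temps = { "TempBlur", "TempBlur2" };
        var passes = new List<(string src, string dst)>();
        for (int i = 0; i < blurCount; i++)
        {
            string destination = temps[i % 2];        // alternate between the two temporaries
            passes.Add((source, destination));
            source = destination;                     // the next iteration reads what was just written
        }
        return (passes, source);
    }
}
```

With one iteration the result is in the first temporary texture and with two it is in the second; the other texture at that point still holds the previous iteration, so binding it would show a less blurred (or, after a single pass, never written) image.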

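5.2 Shader side

Modify VolumetricLight.shader. Note where the pass is added: it must go between the VolumetricLight pass and the Final pass, so that the pass indices match the order of VolumetricLightPassEnum.

```shaderlab
Pass
{
    ZTest Always
    ZWrite Off
    Cull Off
    Name "VolumetricLight Blur"

    HLSLPROGRAM
    #pragma vertex DefaultPassVertex
    #pragma fragment VolumetricLightBlur
    ENDHLSL
}
```

Modify VolumetricLight.hlsl: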

```hlsl
#ifndef VOLUMETRICLIGHT_INCLUDED
#define VOLUMETRICLIGHT_INCLUDED

// ...

TEXTURE2D(_TempBlur);
SAMPLER(sampler_TempBlur);
float4 _TempBlur_TexelSize;

// ...

float _BlurRange;

// ...

// 5-tap blur: center plus four diagonal neighbors, _BlurRange texels away
half4 VolumetricLightBlur(Varyings input) : SV_TARGET {
    float4 tex = SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(-1, -1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(1, -1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(-1, 1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(1, 1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    return tex / 5.0;
}

// ...

#endif
```

V. Complete Code

VolumetricLightPass.cs

```csharp
using UnityEngine.Experimental.Rendering.RenderGraphModule;
using UnityEngine.Rendering;
using UnityEngine;
using UnityEngine.Experimental.Rendering;

public class VolumetricLightPass
{
    static readonly ProfilingSampler sampler = new("VolumetricLightPass");

    static readonly GlobalKeyword[] directionalFilterKeywords = {
        GlobalKeyword.Create("_DIRECTIONAL_PCF3"),
        GlobalKeyword.Create("_DIRECTIONAL_PCF5"),
        GlobalKeyword.Create("_DIRECTIONAL_PCF7"),
    };

    static readonly GlobalKeyword[] otherFilterKeywords = {
        GlobalKeyword.Create("_OTHER_PCF3"),
        GlobalKeyword.Create("_OTHER_PCF5"),
        GlobalKeyword.Create("_OTHER_PCF7"),
    };

    static readonly GlobalKeyword[] cascadeBlendKeywords = {
        GlobalKeyword.Create("_CASCADE_BLEND_SOFT"),
        GlobalKeyword.Create("_CASCADE_BLEND_DITHER"),
    };

    static int
        ProjectionParams2 = Shader.PropertyToID("_ProjectionParams2"),
        CameraViewTopLeftCorner = Shader.PropertyToID("_CameraViewTopLeftCorner"),
        CameraViewXExtent = Shader.PropertyToID("_CameraViewXExtent"),
        CameraViewYExtent = Shader.PropertyToID("_CameraViewYExtent"),
        _SampleCount = Shader.PropertyToID("_SampleCount"),
        _Density = Shader.PropertyToID("_Density"),
        _MaxRayLength = Shader.PropertyToID("_MaxRayLength"),
        _RandomNumber = Shader.PropertyToID("_RandomNumber"),
        _BlurRange = Shader.PropertyToID("_BlurRange"),
        _VolumetricLightTexture = Shader.PropertyToID("_VolumetricLightTexture"),
        _TempBlur = Shader.PropertyToID("_TempBlur");

    TextureHandle VolumetricLightAttachmentHandle, colorAttachment, TempBlur, TempBlur2;

    VolumetricLightParams volumetricLightParams;

    Camera camera;

    ShadowSettings settings;

    // Pass indices; the order must match the pass order in VolumetricLight.shader
    enum VolumetricLightPassEnum
    {
        VolumetricLightPass,
        VolumetricLightBlur,
        Final,
    }

    // Enable only the keyword at enabledIndex; an index of -1 disables all of them
    void SetKeywords(CommandBuffer buffer, GlobalKeyword[] keywords, int enabledIndex)
    {
        for (int i = 0; i < keywords.Length; i++)
        {
            buffer.SetKeyword(keywords[i], i == enabledIndex);
        }
    }

    void SetCameraParams(CommandBuffer buffer)
    {
        Matrix4x4 view = camera.worldToCameraMatrix;
        Matrix4x4 proj = camera.projectionMatrix;

        // Zero the translation of the view matrix, so the results are world-space vectors relative to the camera
        Matrix4x4 cview = view;
        cview.SetColumn(3, new Vector4(0.0f, 0.0f, 0.0f, 1.0f));
        Matrix4x4 cviewProj = proj * cview;

        // Inverse of the view-projection matrix: from clip space back to (camera-relative) world space
        Matrix4x4 cviewProjInv = cviewProj.inverse;

        // World-space positions of three of the near-plane corners (the fourth is not needed)
        var near = camera.nearClipPlane;
        Vector4 topLeftCorner = cviewProjInv.MultiplyPoint(new Vector3(-1.0f, 1.0f, -1.0f));
        Vector4 topRightCorner = cviewProjInv.MultiplyPoint(new Vector3(1.0f, 1.0f, -1.0f));
        Vector4 bottomLeftCorner = cviewProjInv.MultiplyPoint(new Vector3(-1.0f, -1.0f, -1.0f));

        // Edge vectors spanning the near plane
        Vector4 cameraXExtent = topRightCorner - topLeftCorner;
        Vector4 cameraYExtent = bottomLeftCorner - topLeftCorner;

        // Upload the parameters
        buffer.SetGlobalVector(CameraViewTopLeftCorner, topLeftCorner);
        buffer.SetGlobalVector(CameraViewXExtent, cameraXExtent);
        buffer.SetGlobalVector(CameraViewYExtent, cameraYExtent);
        buffer.SetGlobalVector(ProjectionParams2, new Vector4(1.0f / near, camera.transform.position.x, camera.transform.position.y, camera.transform.position.z));
    }

    void Render(RenderGraphContext context)
    {
        CommandBuffer buffer = context.cmd;
        SetCameraParams(buffer);

        SetKeywords(buffer, directionalFilterKeywords, (int)settings.directional.filter - 1);
        SetKeywords(buffer, cascadeBlendKeywords, (int)settings.directional.cascadeBlend - 1);
        SetKeywords(buffer, otherFilterKeywords, (int)settings.other.filter - 1);

        buffer.SetGlobalInt(_SampleCount, volumetricLightParams.SampleCount);
        buffer.SetGlobalFloat(_Density, volumetricLightParams.Density);
        buffer.SetGlobalFloat(_MaxRayLength, volumetricLightParams.MaxRayLength);
        buffer.SetGlobalFloat(_RandomNumber, Random.Range(0.0f, 1.0f));

        // Ray march into the volumetric light texture
        buffer.SetRenderTarget(VolumetricLightAttachmentHandle, RenderBufferLoadAction.DontCare, RenderBufferStoreAction.Store);
        buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.VolumetricLightPass, MeshTopology.Triangles, 3);

        // Blur: ping-pong between TempBlur and TempBlur2, starting from the ray-march result
        TextureHandle source = VolumetricLightAttachmentHandle;
        for (int i = 0; i < volumetricLightParams.BlurCount; i++)
        {
            TextureHandle destination = i % 2 == 0 ? TempBlur : TempBlur2;
            buffer.SetGlobalTexture(_TempBlur, source);
            buffer.SetGlobalFloat(_BlurRange, (i + 1) * volumetricLightParams.BlurRange);
            buffer.SetRenderTarget(destination, RenderBufferLoadAction.DontCare, RenderBufferStoreAction.Store);
            buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.VolumetricLightBlur, MeshTopology.Triangles, 3);
            source = destination;
        }
        // The texture written last holds the blurred result
        buffer.SetGlobalTexture(_VolumetricLightTexture, source);

        // Final: blend onto the camera color, so its current contents must be loaded
        buffer.SetRenderTarget(colorAttachment, RenderBufferLoadAction.Load, RenderBufferStoreAction.Store);
        buffer.DrawProcedural(Matrix4x4.identity, volumetricLightParams.VolumetricLightMaterial, (int)VolumetricLightPassEnum.Final, MeshTopology.Triangles, 3);

        context.renderContext.ExecuteCommandBuffer(buffer);
        buffer.Clear();
    }

    public static void Record(RenderGraph renderGraph, Camera camera, VolumetricLightParams volumetricLightParams, Vector2Int BufferSize,
        in CameraRendererTextures textures,
        in LightResources lightData, ShadowSettings shadowSettings)
    {
        using RenderGraphBuilder builder = renderGraph.AddRenderPass(sampler.name, out VolumetricLightPass pass, sampler);

        // Downsampled pixel size shared by the ray-march texture and both blur textures
        Vector2Int size = BufferSize / (int)volumetricLightParams.DownSmaple;
        var desc = new TextureDesc(size.x, size.y)
        {
            colorFormat = GraphicsFormat.R8G8B8A8_SRGB,
            name = "VolumetricLightPass"
        };
        pass.VolumetricLightAttachmentHandle = builder.CreateTransientTexture(desc);
        desc.name = "TempBlur";
        pass.TempBlur = builder.CreateTransientTexture(desc);
        desc.name = "TempBlur2";
        pass.TempBlur2 = builder.CreateTransientTexture(desc);

        pass.volumetricLightParams = volumetricLightParams;
        pass.camera = camera;
        pass.settings = shadowSettings;

        // Depth for the position reconstruction, color for the final blend
        builder.ReadTexture(textures.depthCopy);
        pass.colorAttachment = builder.ReadWriteTexture(textures.colorAttachment);

        // Light and shadow data used by the ray march
        builder.ReadComputeBuffer(lightData.directionalLightDataBuffer);
        builder.ReadComputeBuffer(lightData.otherLightDataBuffer);
        builder.ReadTexture(lightData.shadowResources.directionalAtlas);
        builder.ReadTexture(lightData.shadowResources.otherAtlas);
        builder.ReadComputeBuffer(lightData.shadowResources.directionalShadowCascadesBuffer);
        builder.ReadComputeBuffer(lightData.shadowResources.directionalShadowMatricesBuffer);
        builder.ReadComputeBuffer(lightData.shadowResources.otherShadowDataBuffer);

        builder.SetRenderFunc<VolumetricLightPass>(
            static (pass, context) => pass.Render(context));
    }
}
```

VolumetricLight.shader

```shaderlab
Shader "Hidden/Custom RP/VolumetricLight"
{
    SubShader {
        HLSLINCLUDE
        #include "../ShaderLibrary/Common.hlsl"
        #include "../ShaderLibrary/VolumetricLight.hlsl"
        ENDHLSL

        Pass {
            Name "VolumetricLight"
            Cull Off
            ZTest Always
            ZWrite Off

            HLSLPROGRAM
            #pragma multi_compile _ _OTHER_PCF3 _OTHER_PCF5 _OTHER_PCF7
            #pragma multi_compile _ _DIRECTIONAL_PCF3 _DIRECTIONAL_PCF5 _DIRECTIONAL_PCF7
            #pragma vertex DefaultPassVertex
            #pragma fragment VolumetricLightFragment
            ENDHLSL
        }

        Pass
        {
            ZTest Always
            ZWrite Off
            Cull Off
            Name "VolumetricLight Blur"

            HLSLPROGRAM
            #pragma vertex DefaultPassVertex
            #pragma fragment VolumetricLightBlur
            ENDHLSL
        }

        Pass
        {
            ZTest Always
            ZWrite Off
            Cull Off
            Blend One SrcAlpha, Zero One
            Name "Final"

            HLSLPROGRAM
            #pragma vertex DefaultPassVertex
            #pragma fragment frag_final
            ENDHLSL
        }
    }
}
```

VolumetricLight.hlsl

```hlsl
#ifndef VOLUMETRICLIGHT_INCLUDED
#define VOLUMETRICLIGHT_INCLUDED

#include "Surface.hlsl"
#include "Shadows.hlsl"
#include "Light.hlsl"

// Cheap hash used to jitter the sample positions; returns a value in [-1, 1]
#define random(seed) sin(seed * 641.5467987313875 + 1.943856175)

TEXTURE2D(_VolumetricLightTexture);
SAMPLER(sampler_VolumetricLightTexture);

TEXTURE2D(_TempBlur);
SAMPLER(sampler_TempBlur);
float4 _TempBlur_TexelSize;

int _SampleCount;
float _Density;
float _MaxRayLength;
float _RandomNumber;
float _BlurRange;

struct Varyings {
    float4 positionCS_SS : SV_POSITION;
    float2 screenUV : VAR_SCREEN_UV;
};

// Full-screen triangle generated from the vertex ID
Varyings DefaultPassVertex(uint vertexID : SV_VertexID) {
    Varyings output;
    output.positionCS_SS = float4(
        vertexID <= 1 ? -1.0 : 3.0,
        vertexID == 1 ? 3.0 : -1.0,
        0.0, 1.0
    );
    output.screenUV = float2(
        vertexID <= 1 ? 0.0 : 2.0,
        vertexID == 1 ? 2.0 : 0.0
    );
    if (_ProjectionParams.x < 0.0) {
        output.screenUV.y = 1.0 - output.screenUV.y;
    }
    return output;
}

// Directional light intensity at a world position (shadow attenuation)
float GetDirLightAttenuation(DirectionalLightData Lightdata, float3 wpos)
{
    // Lights without realtime shadows (strength <= 0) are treated as unshadowed
    if (Lightdata.shadowData.x <= 0.0) {
        return 1.0;
    }
    // Pick the first cascade whose culling sphere contains the point
    int i;
    for (i = 0; i < _CascadeCount; i++) {
        DirectionalShadowCascade cascade = _DirectionalShadowCascades[i];
        float distanceSqr = DistanceSquared(wpos, cascade.cullingSphere.xyz);
        if (distanceSqr < cascade.cullingSphere.w) {
            break;
        }
    }
    // Outside all cascades there is no shadow matrix to use: treat as unshadowed
    if (i == _CascadeCount) {
        return 1.0;
    }
    float3 positionSTS = mul(
        _DirectionalShadowMatrices[(int)Lightdata.shadowData.y + i],
        float4(wpos, 1.0)
    ).xyz;
    return FilterDirectionalShadow(positionSTS);
}

// Point/spot light intensity at a world position: shadow * spot cone * range falloff / distance^2
float GetOtherLightAttenuation(OtherLightData Lightdata, float3 wpos)
{
    int tileIndex = (int)Lightdata.shadowData.y;
    float3 position = Lightdata.position.xyz;
    float3 ray = position - wpos;
    float3 rayDir = normalize(ray);
    // Point lights use one shadow tile per cube map face
    if (Lightdata.shadowData.z == 1.0) {
        tileIndex += (int)CubeMapFaceID(-rayDir);
    }
    float distanceSqr = max(dot(ray, ray), 0.00001);
    float rangeAttenuation = Square2(saturate(1.0 - Square2(distanceSqr * Lightdata.position.w)));
    float3 spotDirection = Lightdata.directionAndMask.xyz;
    float spotAttenuation = Square2(saturate(dot(spotDirection, rayDir) * Lightdata.spotAngle.x + Lightdata.spotAngle.y));
    // Lights without realtime shadows (strength <= 0) skip the shadow lookup
    float shadow = 1.0;
    if (Lightdata.shadowData.x > 0.0) {
        OtherShadowBufferData data = _OtherShadowData[tileIndex];
        float4 positionSTS = mul(data.shadowMatrix, float4(wpos, 1.0));
        shadow = FilterOtherShadow(positionSTS.xyz / positionSTS.w, data.tileData.xyz);
    }
    return shadow * spotAttenuation * rangeAttenuation / distanceSqr;
}

half4 RayMarch(float3 worldPos, float2 uv)
{
    float3 startPos = _WorldSpaceCameraPos;          // ray start: camera position in world space
    float3 dir = normalize(worldPos - startPos);     // view direction
    float rayLength = length(worldPos - startPos);   // distance to the surface
    rayLength = min(rayLength, _MaxRayLength);       // clamp the march distance (_MaxRayLength is set to 20 here)
    float3 final = startPos + dir * rayLength;       // ray end point
    float2 step = 1.0 / _SampleCount;                // x: step size in lerp space (_SampleCount = number of steps)
    step.y *= 0.4;                                   // y: jitter amplitude, 40% of a step
    float seed = random((_ScreenParams.y * uv.y + uv.x) * _ScreenParams.x + _RandomNumber);
    half3 intensity = 0;                             // accumulated light

    for (int j = 0; j < GetDirectionalLightCount(); j++) {
        DirectionalLightData Lightdata = _DirectionalLightData[j];
        half3 tempintensity = 0;
        // Integer loop: exactly _SampleCount samples (accumulating a float step may add an extra iteration through rounding)
        for (int s = 0; s < _SampleCount; s++)       // ray march
        {
            seed = random(seed);
            float3 currentPosition = lerp(startPos, final, s * step.x + seed * step.y); // current sample position
            float atten = GetDirLightAttenuation(Lightdata, currentPosition) * _Density;  // shadow lookup, _Density is the intensity factor
            tempintensity += atten;
        }
        intensity += tempintensity * Lightdata.color.rgb / _SampleCount;
    }

    for (int k = 0; k < GetOtherLightCount(); k++) {
        OtherLightData Lightdata = _OtherLightData[k];
        half3 tempintensity = 0;
        for (int s = 0; s < _SampleCount; s++)       // ray march
        {
            seed = random(seed);
            float3 currentPosition = lerp(startPos, final, s * step.x + seed * step.y);
            float atten = GetOtherLightAttenuation(Lightdata, currentPosition) * _Density;
            tempintensity += atten;
        }
        intensity += tempintensity * Lightdata.color.rgb / _SampleCount;
    }

    // Average over all lights; max(..., 1) avoids a division by zero when there are no lights
    int lightCount = GetDirectionalLightCount() + GetOtherLightCount();
    intensity /= max(lightCount, 1);
    return half4(intensity, 1);
}

half4 VolumetricLightFragment(Varyings input) : SV_TARGET {
    // Sample the depth buffer and convert it to linear eye depth
    float depth = SAMPLE_DEPTH_TEXTURE_LOD(_CameraDepthTexture, sampler_point_clamp, input.screenUV, 0);
    depth = IsOrthographicCamera() ? OrthographicDepthBufferToLinear(depth) : LinearEyeDepth(depth, _ZBufferParams);
    // World position of the surface under this pixel
    float3 wpos = _WorldSpaceCameraPos + ReconstructViewPos(input.screenUV, depth);
    return RayMarch(wpos, input.screenUV);
}

// 5-tap blur: center plus four diagonal neighbors, _BlurRange texels away
half4 VolumetricLightBlur(Varyings input) : SV_TARGET {
    float4 tex = SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(-1, -1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(1, -1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(-1, 1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    tex += SAMPLE_TEXTURE2D_LOD(_TempBlur, sampler_TempBlur, input.screenUV + float2(1, 1) * _TempBlur_TexelSize.xy * _BlurRange, 0);
    return tex / 5.0;
}

// Output the volumetric light texture; the Final pass blend state adds it to the camera color
half4 frag_final(Varyings input) : SV_TARGET {
    float4 b = SAMPLE_TEXTURE2D_LOD(_VolumetricLightTexture, sampler_VolumetricLightTexture, input.screenUV, 0);
    return b;
}

#endif
```
